Introduction

Retry schedules are the last important concept you need to understand to work with evQueue. As is often the case, the best way to understand them is through an example.

Let's imagine that every day you have to fetch a file from an FTP server. Suppose that this FTP server doesn't work very well and often gives you connection errors.

You have scheduled a task 'fetch_from_ftp' every morning, but you still have to check whether everything went well. If there is an error, you have to launch the task again manually to ensure the file is fetched.

That's where retry schedules enter the scene. You can configure evQueue to automatically re-launch a failed task a certain number of times. If at any time the task succeeds, it will be considered successful and the workflow will go on. If all executions fail, the task will be considered failed and the workflow will end (with error status).
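The behaviour described above can be pictured with a small shell sketch. This is only an analogy for what evQueue does for you, not its actual implementation; the function name `retry_task` and its `max_retries` argument are hypothetical.

```shell
#!/bin/bash
# Hypothetical sketch: evQueue performs retries itself, this only mimics the idea.
# Usage: retry_task <max_retries> <command> [args...]
retry_task() {
    local max_retries=$1
    shift
    local attempt=0
    while true; do
        # Stop at the first success: the task is considered successful.
        if "$@"; then
            return 0
        fi
        # Every allowed retry has been used: the task is considered failed.
        if [ "$attempt" -ge "$max_retries" ]; then
            return 1
        fi
        attempt=$(( attempt + 1 ))
    done
}
```

For example, `retry_task 4 ./fetch_from_ftp.sh` (a hypothetical script name) would stop as soon as one execution returns a zero exit status.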

The resulting workflow can be found here: lame_ftp.xml.

Create the schedule

So, first of all, why is it called a 'retry schedule'? Isn't it simply a number of retries?

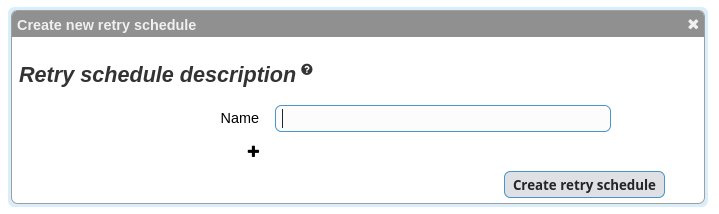

Well, not exactly. It is a bit more powerful, because you can configure a variable delay between retries. This might become clearer in the interface, so go to the Setting -> Retry schedules screen and add your first retry schedule.

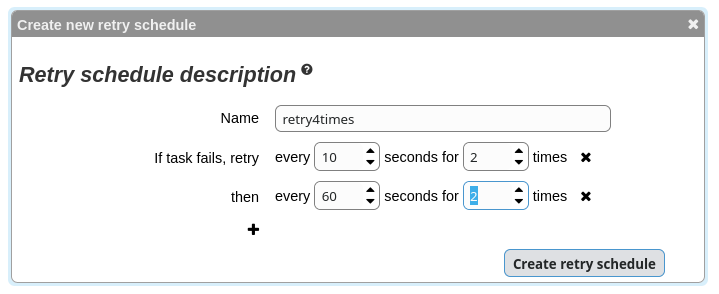

Name it retry4times and configure it with 2 retries 10 seconds apart, followed by 2 retries 60 seconds apart.

This way, we will have a maximum of 4 retries for a period of 140 seconds (2 times 10 seconds + 2 times 60 seconds).
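The arithmetic above can be checked with a short shell sketch. The array `delays` and the function `total_wait` are illustrative names, not part of evQueue, and the layout of the schedule (two delays of 10 seconds followed by two of 60 seconds) is taken from the figures quoted in the text.

```shell
#!/bin/bash
# Assumed layout of retry4times: 2 retries 10 s apart, then 2 retries 60 s apart.
delays=(10 10 60 60)

# Add up every delay to get the longest time spent waiting between retries.
total_wait() {
    local sum=0 d
    for d in "$@"; do
        sum=$(( sum + d ))
    done
    echo "$sum"
}

total_wait "${delays[@]}"   # prints 140
```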

The workflow

Create a new workflow and name it lame_ftp.

We'll simulate our FTP server. For demonstration purposes, we'll use the following script:

    #!/bin/bash
    exit $(( RANDOM % 2 ))

As you can see, this is a very lame server because it fails half the time. That's when retry schedules come in handy!
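To get a feel for how unreliable this simulated server is, the same expression can be run many times in a loop. This is a minimal sketch; the helper names `lame_ftp` and `count_failures` are made up for the illustration.

```shell
#!/bin/bash
# Same expression as the script above, wrapped in a function so it can be called repeatedly.
lame_ftp() {
    return $(( RANDOM % 2 ))
}

# Count how many of N simulated runs fail (non-zero status).
count_failures() {
    local runs=$1 failures=0 i=0
    while [ "$i" -lt "$runs" ]; do
        lame_ftp || failures=$(( failures + 1 ))
        i=$(( i + 1 ))
    done
    echo "$failures"
}

count_failures 1000   # roughly 500, varies from run to run
```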

Create a task (script type) with this script and name it lame_ftp.

Go to the 'Queue & retry' tab. Choose the 'retry4times' schedule and close.

You're done, this one was a simple one!

Trying the retry

This time, testing will be trickier because of the random result. If you launch a new instance and the FTP works, it will stop immediately and return a normal status.

If you launch it several times, you will see that it sometimes runs longer and displays a new status.

This means that a task has failed, but that it will be retried. Normally, the workflow should end with a good status most of the time (probability says 15 times out of 16).

If you launch it enough times, you should be able to see it fail (if our lame FTP server fails 4 consecutive times).
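The figure of 15 out of 16 corresponds to four independent executions that each fail half the time. Here is a minimal sketch of that arithmetic, using a hypothetical helper `success_odds`. The second call shows what the odds would be with five executions (an initial run plus four retries); this is only an assumption about how executions might be counted, not something stated here.

```shell
#!/bin/bash
# With a failure chance of 1/2 per run, all n runs fail with probability 1/2^n.
# Prints the odds of at least one success as "numerator/denominator".
success_odds() {
    local n=$1
    local denom=$(( 1 << n ))
    echo "$(( denom - 1 ))/$denom"
}

success_odds 4   # prints 15/16
success_odds 5   # prints 31/32
```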

That's it, now you can let the scheduled workflow run every morning without launching it manually!

Also note that you could add other tasks after this first one, for example a script processing the data fetched from the FTP server.